More and more human tasks are being taken over by technology – what does that say about us?

The British anthropologist Richard Wrangham argues that we could only become truly ‘human’ once we were able to consume enough calories to fuel those bizarrely large brains of ours. With raw food, that’s simply not possible, he calculated. So only when we learned to cook could we become human.

Whether he’s entirely right or not, many aspects of meal preparation have increasingly been outsourced – to other people and to technology. Hardly anyone bakes their own bread anymore, and almost no one grows their own grain or slaughters their own pig.

The growth in revenue for meal delivery services continues, and in the U.S. there are already apartments without kitchens. But that outsourcing doesn’t go on forever. In fact, the number of people who cook for themselves has risen since 2003, even in the U.S. And that’s wise: it’s demonstrably healthier, often cheaper, and usually tastier.

Telling Stories

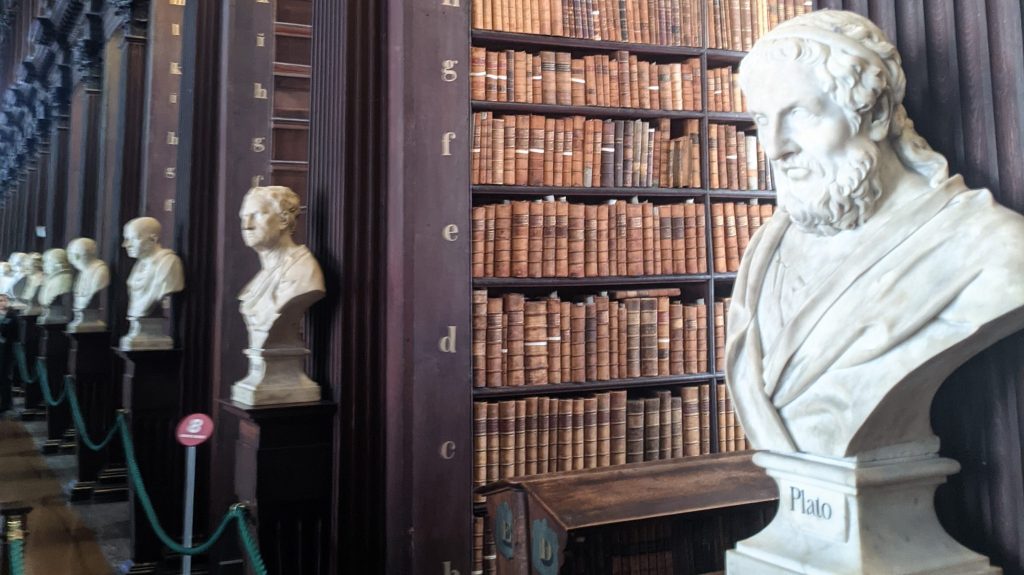

The other essential characteristic of humans is language: the ability to speak and listen. We’ve partly outsourced that skill to technology as well. Reading and writing allow you to ‘speak’ to people who aren’t here or who don’t even exist yet. Socrates, in Phaedrus, wasn’t very positive about that skill.

His objection: people become lazy; they seem wise but are not. Books don’t talk back.

“… you, who are the father of letters, have been led by your affection to ascribe to them a power the opposite of that which they really possess. For this invention will produce forgetfulness in the minds of those who learn to use it, because they will not practice their memory. Their trust in writing, produced by external characters which are no part of themselves, will discourage the use of their own memory within them. You have invented an elixir not of memory, but of reminding; and you offer your pupils the appearance of wisdom, not true wisdom, for they will read many things without instruction and will therefore seem to know many things, when they are for the most part ignorant and hard to get along with, since they are not wise, but only appear wise.” (historyofinformation.com)

We only need to change a few words in his rant and it reads like a contemporary critique of LLMs. Incidentally, Socrates did consider reading and writing useful for administrative purposes, such as recording laws or contracts. The debate wasn’t entirely settled: in 1476, the Parisian guild of writers destroyed the first printing press and managed to block its adoption for years.

Outsourcing Thinking

Outsourcing the making of food, or the remembering and telling of stories, is one thing. But outsourcing thinking—that hits very close to home. The psychotherapy chatbot Eliza could still be seen as a harmless (though interesting) joke. And in something as noble as chess, surely humans would always remain superior? “Hold my beer,” said Feng-hsiung Hsu, and built the chess computer Deep Blue, which defeated the reigning world champion (Garry Kasparov) in a thrilling match in 1997. The much more complex game of Go was conquered within 20 years as well.

Fun fact: both Deep Blue and AlphaGo have shown unexpected, seemingly ‘superhuman’ behaviour.

In one of the games, at move 44, Deep Blue hit a bug that sent it into an infinite loop, after which the program fell back on a random move. Kasparov was so surprised that he wondered whether it was a mistake or some brilliant tactic he had failed to understand. It is said that this threw him so far off balance that he eventually lost the match, although he denies that. The AlphaGo match against Lee Sedol had a similar moment: AlphaGo’s move 37 in the second game was totally unexpected. It turned out that AlphaGo had found a tactic no human player had considered before.

And now we have GenAI, which automates the formulation and interpretation of text. Are we going too far? Do we risk losing our humanity if we outsource this on a large scale?

Large Language Models—the engines behind AI chatbots—can compute with words: we’ve combined language and math. Math had already been outsourced earlier. When calculators were introduced, similar reactions arose: “They can’t do mental arithmetic anymore!” was the lament when a young cashier struggled with making change.

Are We Getting Dumber?

In the 1970s and 80s, there was debate about word processors and spell-checking – these were said to undermine critical thinking. The ability to easily delete or move text would lead to poorly structured writing. That may well have been true. For reading, writing, and math, the trend is certainly not encouraging.

For Generative AI, researchers at the MIT Media Lab have measured what is popularly called “brain rot” and introduced the term “cognitive debt”: postponing deep thinking and accepting the output of a Generative AI assistant without question.

Whether the experiment is entirely convincing is debatable. Doing the same task with automation obviously takes less mental effort. But with tools, you can tackle other, harder tasks that require more thinking, as one interesting response points out. Using Excel to check change at the register is lazy (or excessive), but using Excel to calculate your mortgage is legitimate. Likewise, you could argue that with AI assistance, you’re not just doing the same thing faster – you’re taking on bigger, more complex, and more challenging jobs instead.

But if the tasks you perform with tools aren’t much harder, then their use certainly leads to a kind of “brain rot.” Navigation apps haven’t really made us take more difficult trips, so they weren’t strictly necessary. The result: map reading and asking for directions are now dying skills. And what about dating apps?

Shifting Acceptance

Even members of parliament are concerned: what if children stop Googling and only ask ChatGPT? Well, 25 years ago, the worry was that children only Googled and no longer learned from real books. And 2,500 years ago, Socrates already had his doubts about those books.

The big question is whether AI is truly different. Is there still hope, after we outsourced bread-making to bakers, storytelling to books, and arithmetic to computers?

Stay Critical!

There’s a chance that AI will cause the end of humanity, and a chance that we’re heading toward a golden future – even the smartest professors don’t know. It will probably be somewhere in between. What do we outsource, and what don’t we?

It took the COVID pandemic to make bread-baking popular again. More stories are being told than ever – if you count Netflix. The calculator debate is settled: we now teach estimating so you can spot weird results caused by input errors.

AI chatbots keep changing. That’s inconvenient, because every update brings new kinds of hallucinations (“calculation errors”) that you have to learn to recognize. Society hasn’t yet had the chance to fully adapt to AI’s possibilities. Critical thinking remains essential – even in situations where we’re not dealing with AI-generated text.

In information processing, we often distinguish between data, information, knowledge, and wisdom. We’ve successfully outsourced handling data (calculators, books), and now we’ve outsourced handling information and knowledge (internet, chatbots). What remains is wisdom. That we must not outsource—so we can make the right choices. We can choose to maintain our skills: bake bread, tell a story, critically read a full article. When do you really want to make an effort? When do you use your humanity to achieve something? As long as the choice between doing it yourself and outsourcing exists—and we’ve learned to make those choices wisely—the end of humanity will be postponed for quite a while.
